Pearson’s Aida Marks an Unprecedented Step Forward in AI-Powered Personalized Learning

By Henry Kronk

November 13, 2019

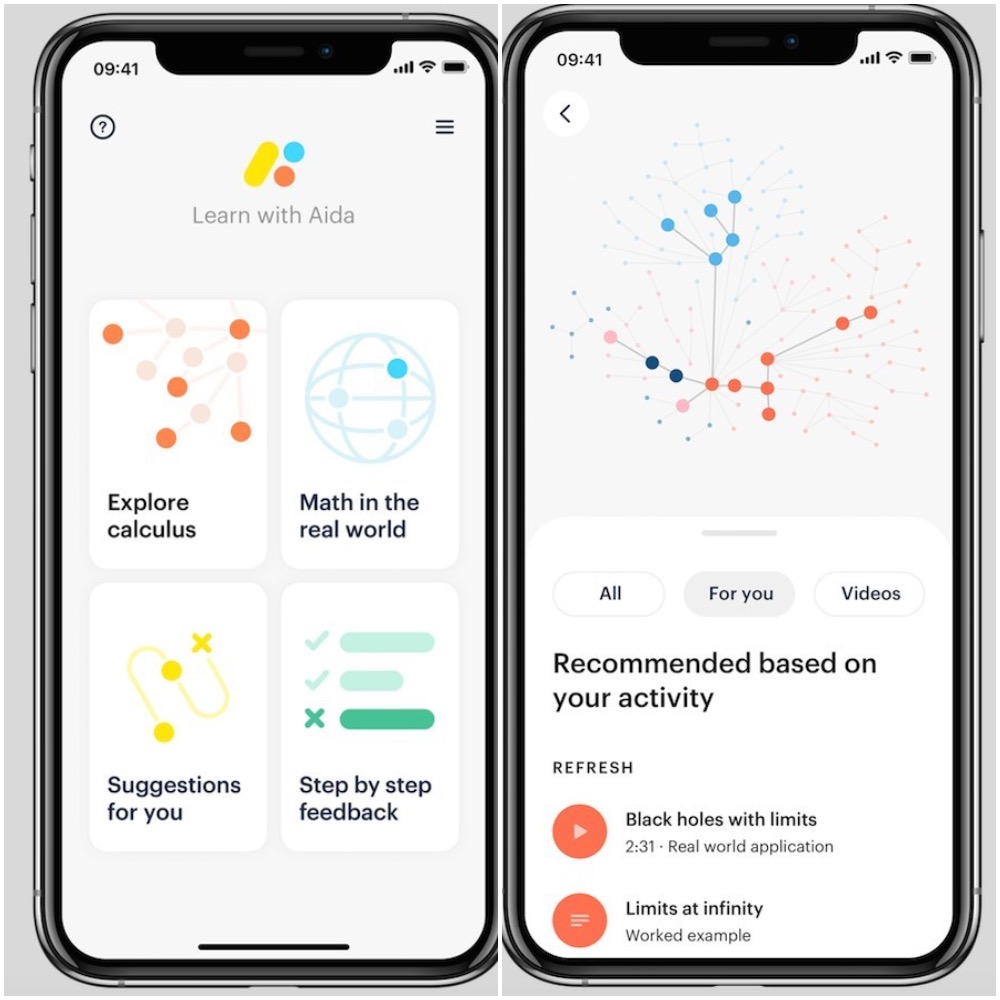

AI-powered personalized learning interventions have been put to use widely in K-12 education. Many focus on discrete elementary subjects, like early literacy and math instruction. On November 12, however, the academic publisher and digital learning provider Pearson moved the needle further with the release of Aida, a mobile app that offers personalized calculus instruction.

Aida has numerous features that one might expect to find in a calculus learning app: hundreds of both abstract and real-world how-tos and explainers, some of which are delivered in high-quality videos. The app also tracks learner progress and provides suggestions for what to learn next.

Learning Calculus with Aida

But Aida marks a real step forward with its ability to track and analyze students’ work. With Aida, students can write out their reasoning on a piece of paper, as they would in the classroom or doing homework. They can then take a picture of this work with their phone. Using numerous AI techniques, such as deep learning and reinforcement learning, Aida can then understand that handwriting, analyze a student’s reasoning, and determine whether or not it is correct.

If there are errors in the steps presented to Aida, the app can suggest lessons and, as a last resort, present students with the correct answer and the steps to achieve it.

“With a lot of the current products out there, what happens is you put your information in the application, and it scores your final answer,” said Pearson VP of AI Product Development Dr. John Behrens. “Many students write on the paper and then type the answer into the computer. So you’re separated from your process, you’re separated from your thinking. And you have to add this whole layer of knowledge to manage that web interface.

“We’ve lowered the cognitive bar to bring the students’ work right to the computing. They don’t have to add all this overhead. They get the work expressed in their own language, they do all the steps, and then they get a record of it and the feedback about it.”

It was also important to Pearson developers that they deliver this product as a mobile app.

“Students today often use mobile phones as their primary device, even though they might be doing homework on desktop or web-based systems,” said Pearson VP of Intelligent Systems R&D Dr. Johann Ari Larusson. “But the screen real estate for mobile devices, of course, is not as extensive as it is on a normal desktop computer, which is why it’s so important to look at all the different aspects that will make the user experience so much richer and easier for the student.”

As Larusson says, this is just the beginning. Pearson has been working to build capacity in AI-delivered instruction and assessment for years. In a 2014 white paper, education researchers Michael Barber and Peter Hill laid out plans to deliver “instruction that is adjusted on a daily basis to the readiness of each student and that adapts to each student’s specific learning needs, interests and aspirations.”

More recently, Pearson has developed Realize, a learning management system that can integrate personalized instruction onto a single platform. The company has also integrated Realize with Google Classroom.

“This is just the beginning.”

Both Larusson and Behrens wanted to remain focused on the launch of Aida in the interview, and mostly stayed away from discussing possible integrations and broader plans to build further AI-powered personalized learning capacity. But they did say there will be more to come.

“Calculus isn’t the only stop,” Behrens said. “There’s an expectation that we’re going to [be] building a family of business-to-consumer apps with Aida. There’s plenty of potential for other avenues within Pearson for these technologies.”

“We wanted to make sure that we created enough scaffolding and malleability under the hood to support these features,” Larusson said. “So we really had to think through how to embed this particular feature into the app but also how we curate the data and, more importantly, how we understand what the data means.

“We spent quite a bit of time trying to understand how students work, what are the utensils that they use, what is their balance between writing stuff on paper versus switching to the phone. We needed to start it there to design the schematics of the AI so that it brings down the barriers and combines the physical world, where students scratch out the solutions, to the actual learning world, which is Aida.”

The Data Question

To assess learners and personalize instruction, Aida collects quite a bit of data. Behrens says that data will not be sold or used for marketing purposes. It will, however, be used for the purposes of learning science.

“The role of the data is to promote research,” Behrens said. “We see this as a learning science cycle of getting the data, understanding the learners better, improving the product, and improving our understanding of learning. So we’re very excited about the direction that we’re going in.”

Pearson has, in the past, expressed support for sharing anonymized data sets and for open data initiatives to support learning science. In a 2016 Pearson publication titled Intelligence Unleashed: An argument for AI in Education, researchers wrote, “we know that the sharing of data is essential to the integration of [AI educational] systems, and that the sharing of anonymized data has the potential to move the field forward by leaps and bounds by cutting back on wasteful duplicative efforts.”

Behrens and Larusson said that Pearson researchers will continue to publish research and present at conferences. But the data generated from Aida will not be made publicly available, and it will not be part of any open data initiatives for the time being.

The App Will Improve Over Time

Outside of the development process, there has not been any efficacy testing of Aida. But Behrens says that Aida is designed to learn and improve over time.

“Aida’s detailed level of capturing process data will be helping us to understand what the students know and don’t know, where their weaknesses are, and how those weaknesses relate to each other. The efficacy program is built in through the data collection, understanding the students, updating the student models, and updating the AI models about student characteristics. Efficacy is built in from the ground up. So it’s not some kind of post-hoc add-on-the-end kind of thing that’s going to happen later. It’s something that’s happening organically in the design of the application and the ecosystem around it.”

Featured Image: Gilles Lamber, Unsplash.
